Methodology

The Substrate Geometry framework rests on a small number of physics oracles — contact distribution, Hertzian stress, frictional thermal, Archard wear, Basquin fatigue, equilibrium count, constant width — that score candidate primitives against operational invariants. Each oracle is, separately, a physics simulator with its own approximation regime, convergence behavior, and failure modes. The methodology paper is the work of characterizing those regimes empirically rather than asserting them theoretically. Five load-bearing claims are documented, each with two or more independent empirical data points, alongside six explicit non-claims and six deferred findings preserved for theoretical follow-up.

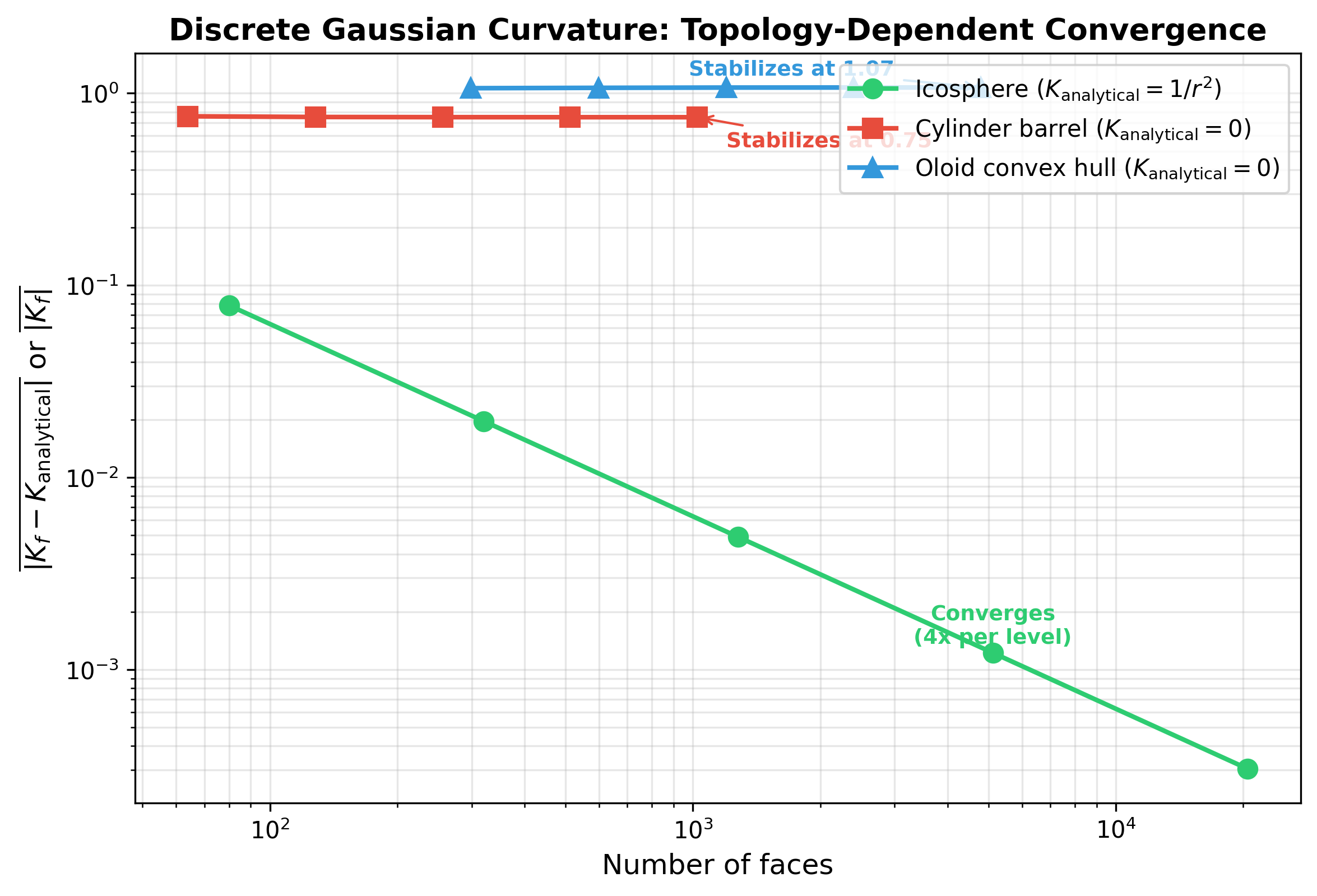

Curvature bias on developable surfaces

The discrete Gaussian curvature estimator, using the standard angle-deficit method, produces |Kf| = 1.07 on the oloid mesh at all resolutions tested. The analytical Gaussian curvature of the oloid is identically zero, since the oloid is developable. The estimator does not converge with resolution. SDS shifts by 17% when the analytical value is substituted for the estimator output. This is a topology-mismatched failure mode of the standard discrete K estimator on developable surfaces, and to the program's knowledge it is documented here for the first time in this specific form.
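For readers unfamiliar with the angle-deficit method, the following is a minimal sketch of how such an estimator is usually built. It is not the program's code: the function name, the barycentric (one-third of incident face area) vertex area, and the octahedron test mesh are all assumptions made for illustration, and the octahedron is a stand-in, not the oloid.

```python
import numpy as np

def angle_deficit_curvature(vertices, faces):
    """Return (deficit, K) per vertex for a closed triangle mesh.

    deficit = 2*pi minus the sum of incident triangle angles.
    K = deficit / vertex area, with the barycentric area (one third of
    each incident face) as an ASSUMED area weighting.
    """
    V = np.asarray(vertices, dtype=float)
    F = np.asarray(faces, dtype=int)
    angle_sum = np.zeros(len(V))
    area = np.zeros(len(V))
    for tri in F:
        p = V[tri]
        face_area = 0.5 * np.linalg.norm(np.cross(p[1] - p[0], p[2] - p[0]))
        for i in range(3):
            a = p[(i + 1) % 3] - p[i]
            b = p[(i + 2) % 3] - p[i]
            cos_t = np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b))
            # Clip guards against round-off pushing cos_t outside [-1, 1].
            angle_sum[tri[i]] += np.arccos(np.clip(cos_t, -1.0, 1.0))
            area[tri[i]] += face_area / 3.0
    deficit = 2.0 * np.pi - angle_sum
    return deficit, deficit / area

# Illustrative mesh only: a regular octahedron (not the oloid).
OCTA_V = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]
OCTA_F = [(0, 2, 4), (2, 1, 4), (1, 3, 4), (3, 0, 4),
          (2, 0, 5), (1, 2, 5), (3, 1, 5), (0, 3, 5)]

deficit, K = angle_deficit_curvature(OCTA_V, OCTA_F)
print(deficit)  # each vertex: 2*pi/3, since four 60-degree angles meet there
print(K)        # each vertex: pi/sqrt(3) under the barycentric area assumption
```

The pointwise value depends on the area weighting and on how the triangulation sits relative to the surface, which is where a bias like the one reported above can enter.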

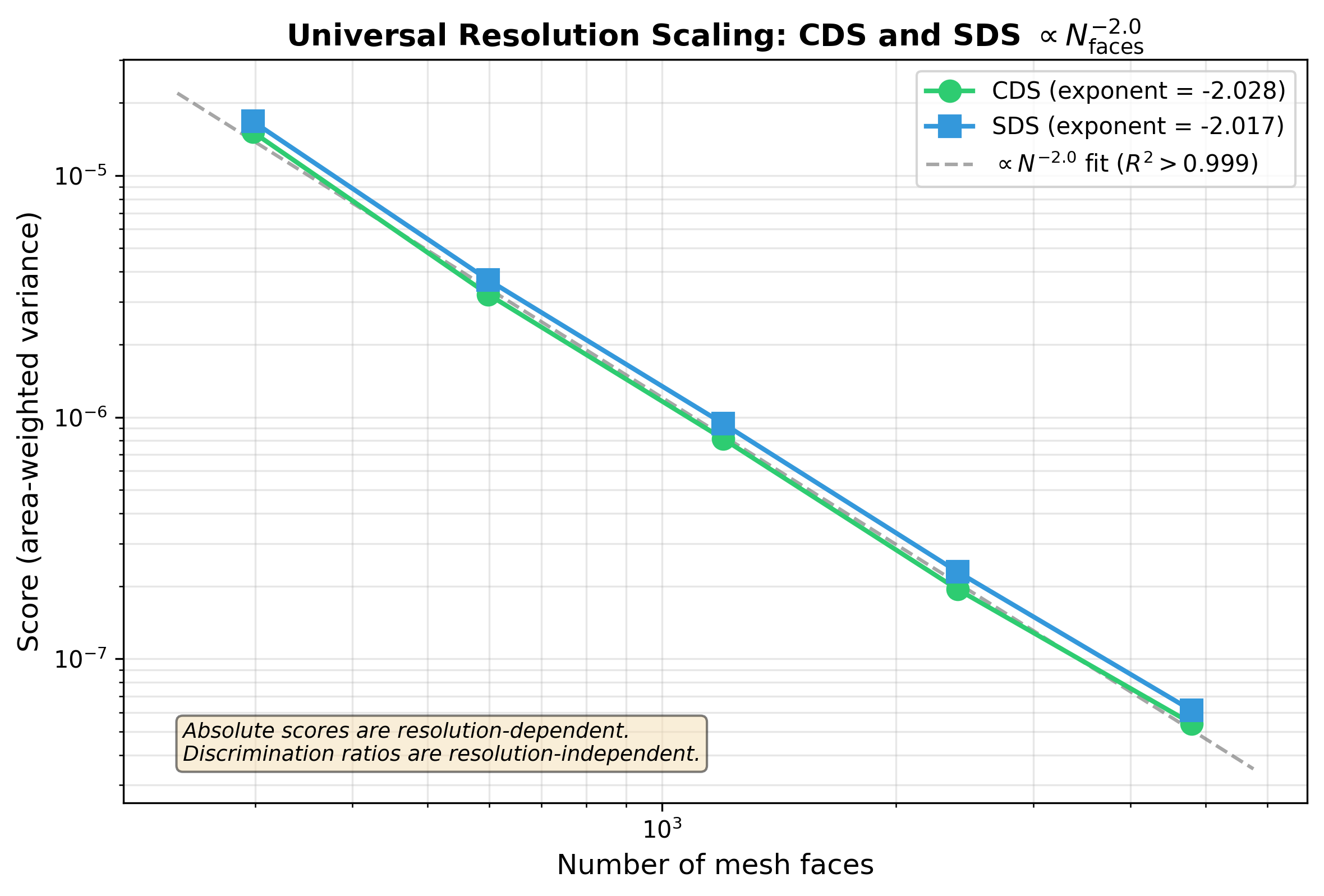

Resolution scaling is universal across variance-based metrics

The variance-based oracle outputs share a resolution-scaling family. CDS scales as resolution^(−2.028), SDS as resolution^(−2.017), with the difference (0.011) within measurement precision across the tested resolution range. ECS, a topological metric, is confirmed resolution-independent above 25,600 faces. The implication for cross-oracle comparison: variance-based oracle outputs require resolution normalization before quantitative comparison across oracles is meaningful; topological oracle outputs do not.
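A scaling exponent of this kind is typically estimated by a straight-line fit in log-log space, and the fitted exponent can then be used to rescale a measurement to a common reference resolution. The sketch below assumes that approach; the function names are hypothetical, the data are synthetic (an exact power law with exponent −2), and nothing here reproduces the paper's measurements.

```python
import numpy as np

def fit_scaling_exponent(resolutions, values):
    """Least-squares fit of values ~ prefactor * resolution**exponent."""
    slope, intercept = np.polyfit(np.log(resolutions), np.log(values), 1)
    return slope, np.exp(intercept)

def normalize_to_reference(value, resolution, ref_resolution, exponent):
    """Rescale a metric to the value the power law predicts at ref_resolution."""
    return value * (ref_resolution / resolution) ** exponent

# Synthetic, illustrative data only: an exact power law with exponent -2.
res = np.array([50.0, 100.0, 200.0, 400.0, 800.0])
vals = 3.0 * res ** -2.0

exponent, prefactor = fit_scaling_exponent(res, vals)
print(exponent, prefactor)  # recovers approximately -2.0 and 3.0

# A value measured at resolution 100, expressed at reference resolution 200.
print(normalize_to_reference(vals[1], 100.0, 200.0, exponent))
```

Because the fit is linear in log space, two metrics with nearly equal exponents (as reported for CDS and SDS) can share one normalization, while a resolution-independent metric needs none.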

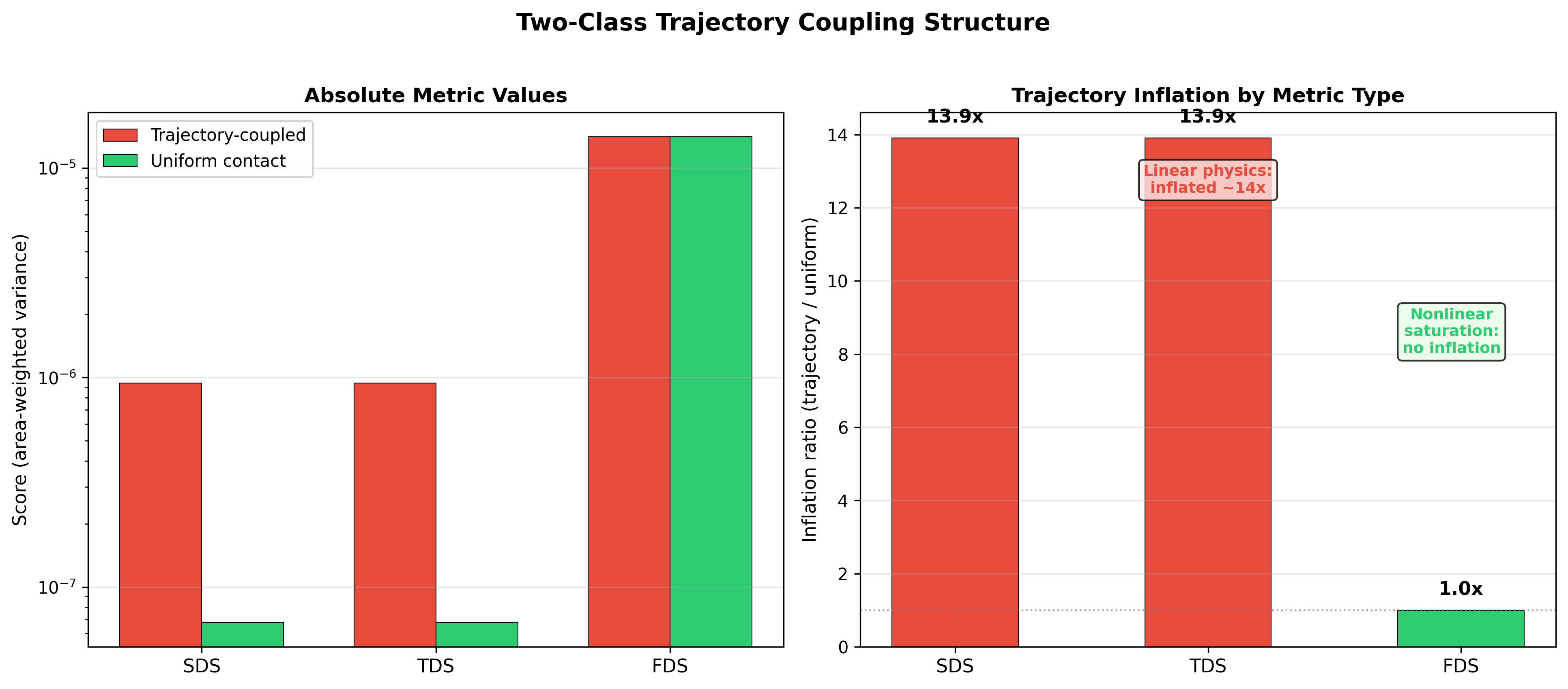

Trajectory coupling inflates linear metrics

Linear metrics inflate by a factor of 13.9× when evaluated along a shared rolling trajectory rather than on a geometry-only basis. Nonlinear saturation metrics do not (FDS inflation factor 1.0×). Paper I's original SDS/CDS = 0.98 transfer claim was partly an artifact of this shared-trajectory coupling. The v2 revision applies the correction and restates geometry-only SDS as 4.8 × 10⁻⁸ while preserving the 58× discrimination headline. Trajectory-coupling awareness is now a precondition for any cross-oracle inference; the discipline is inherited by Papers III and IV as part of their standard protocol.

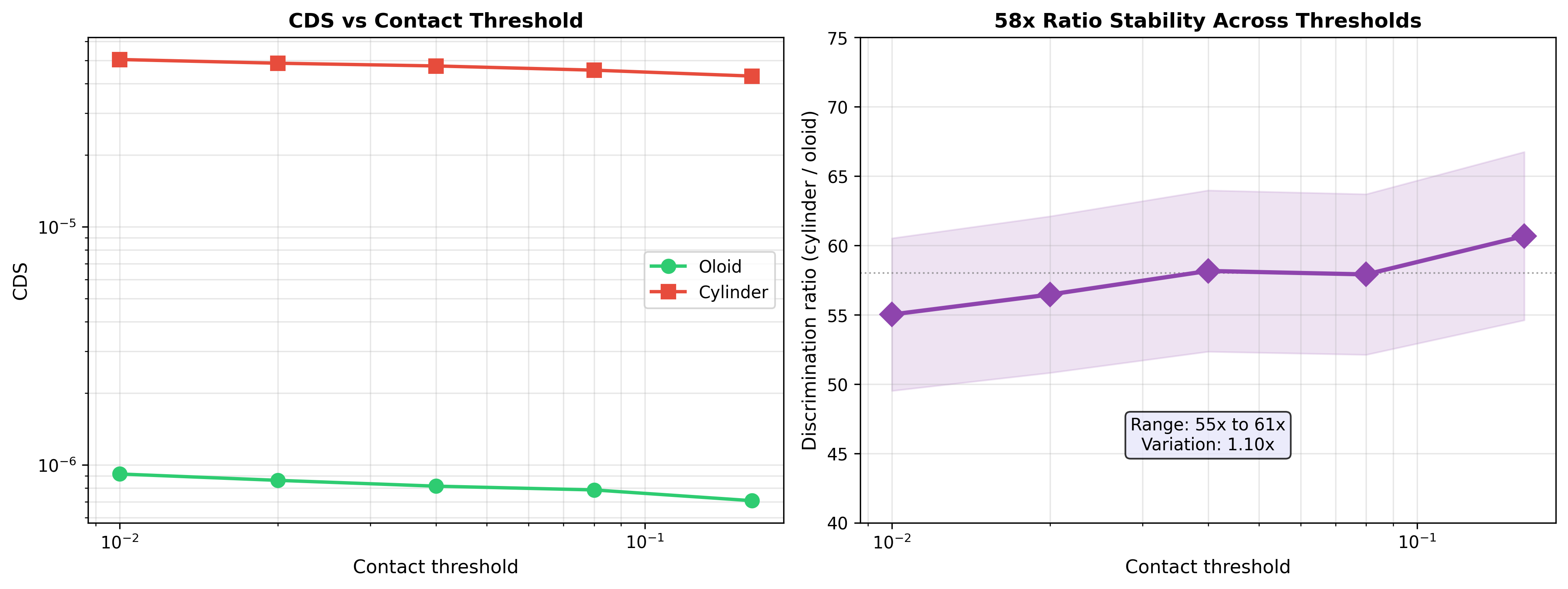

Threshold robustness

A 16× range of contact-threshold variation produces only a 10% variation in the headline discrimination ratio. The 58× CDS finding is robust to the threshold choice. This addresses the obvious skeptical question of whether the discrimination result depends on a specific contact-threshold calibration; it does not.

Discrete Gauss–Bonnet holds in integral

The discrete Gauss–Bonnet theorem holds exactly on the oloid mesh: ∑ K × A = 4π, with an observed-to-expected ratio of 1.000000. The estimator integrates correctly even though it fails pointwise. A 7.8% discrepancy between the predicted and the observed mean K reflects vertex-to-face averaging effects. The finding is preserved as a deferred theoretical follow-up rather than resolved in the present methodology paper. The honest position: the estimator's pointwise failure is documented and corrected; its integral consistency is preserved as a structural observation whose theoretical implications exceed the scope of the present work.
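One way to see why the integral can be exact while pointwise values are biased: assuming the estimator is the usual angle deficit divided by a per-vertex area A_v on a closed triangle mesh (an assumption about the implementation, with V, E, F the vertex, edge, and face counts and θ the interior angles), the area weights cancel and the sum reduces to a purely combinatorial identity.

```latex
\begin{align}
K_v &= \frac{2\pi - \sum_{f \ni v} \theta_{v,f}}{A_v} \\
\sum_{v} K_v A_v &= 2\pi V - \sum_{f} \pi = 2\pi V - \pi F \\
3F = 2E \quad\Rightarrow\quad V - E + F &= V - \tfrac{F}{2} = \chi \\
\sum_{v} K_v A_v &= 2\pi \chi = 4\pi \qquad (\chi = 2)
\end{align}
```

The result depends only on the mesh connectivity, not on vertex positions or on the choice of A_v, so it says nothing about whether individual K_v values match the analytical curvature.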

Operating principles

The methodology paper enforces five operating principles that propagate to all subsequent work in the program. These are not stylistic preferences; they are the discipline that lets the framework's coherence claims be load-bearing.

- Falsification criteria committed before runs. Each paper has a pre-registered acceptance threshold for its load-bearing claims, written down before data collection begins. The discipline prevents post-hoc threshold adjustment.

- Adversarial audit per paper. Each paper goes through a parallel-session adversarial review pass to identify weakest assumptions before submission. The audit produces a written list of attack vectors and the paper's response to each.

- Honest negatives reported prominently. The Neovius topological bottleneck failure in Paper IV, the discrete K estimator bias in the methodology paper, and the trajectory-coupling artifact in Paper I v1 are all surfaced in the main body, not buried in limitations sections. Honest negatives compound credibility over time; buried negatives compound the opposite.

- Scope preservation during drafting. Claims that emerge during writing but were not pre-registered go in a scope_creep_log rather than into the paper. The log is preserved and surfaces in subsequent work as appropriate, but never as a new claim retroactively grafted onto the current paper.

- Frozen codebase for published work. Code that underlies a published paper is never modified after publication. Subsequent work goes in separate folders that sandbox each paper's reproducibility. The discipline preserves the historical record of what was actually run when the paper was submitted.

Recognition of methodological discipline is what shifts an evaluator's question from "is this real" to "what does this find."

The methodology paper is the strongest credentialing artifact in the program for skeptical audiences. The framework's coherence claims rest on the measurement apparatus being characterized, not on the headline numbers being large.